#1839 AI and Diabetes Care with Dr. Sarah Gebauer

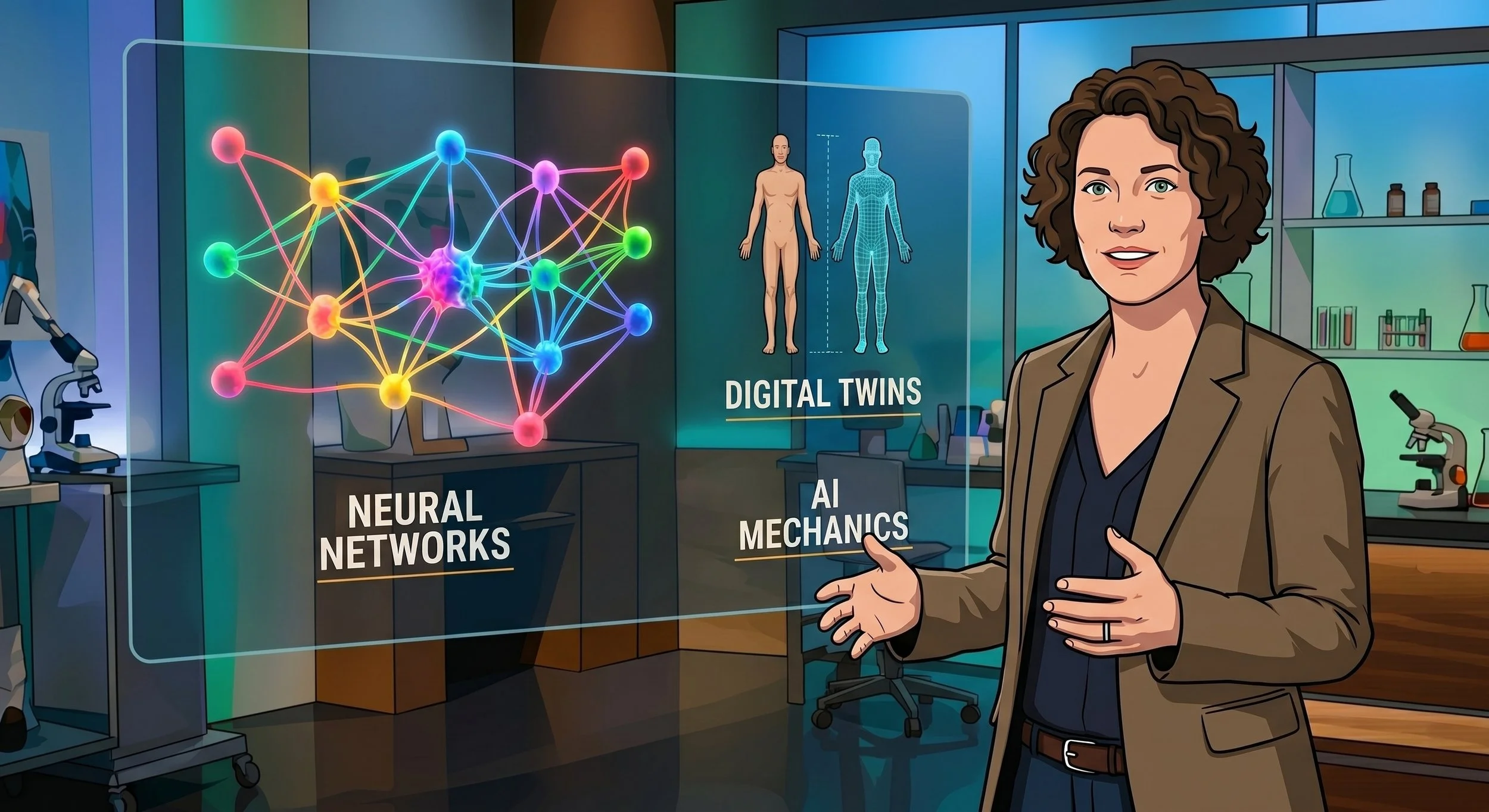

Dr. Sarah Gebauer explains the mechanics of artificial intelligence in healthcare. We discuss neural networks, digital twins, FDA regulatory challenges, and practical ways to use AI for everyday tasks.

Key Takeaways

- The Promise of Digital Twins: Artificial Intelligence is paving the way for "digital twins," allowing medical professionals to test treatments and algorithms on a digital representation of your data before applying them to your actual body.

- AI as an Empowering Tool: Large Language Models (LLMs) and AI agents democratize technology, enabling individuals with no coding background to create powerful websites, apps, and diabetes management tools simply by using natural language.

- Regulatory Challenges with Medical AI: Because generative AI is probabilistic (producing the most likely answer rather than a guaranteed deterministic outcome), regulatory bodies like the FDA struggle to approve constantly adapting, individualized diabetes algorithms.

- Responsible Health Advocacy: While groundbreaking trials (like the Eledon trial utilizing islet cells and tegoprubart) show great promise for functional cures, it's vital to communicate these advancements responsibly, avoiding misleading social media hype that frames them as imminent, widespread cures.

- Prompt Engineering is a Learnable Skill: Getting the most out of AI (like Claude or Gemini) requires practice and "pre-bolusing" your tasks—giving the AI context and asking it to help refine its own instructions before generating the final output.

Resources Mentioned

- Contour Next Gen: contournext.com/juicebox

- Cozy Earth (Use code JUICEBOX for 20% off): cozyearth.com

- US Med: usmed.com/juicebox or call (888) 721-1514

- Wrong Way Recording: wrongwayrecording.com

- Juicebox Podcast & Juicebox Docs: juiceboxpodcast.com

- Juicebox Podcast Private Facebook Group (Type 1 Diabetes on Facebook)

Introduction

Scott Benner Here we are back together again, friends, for another episode of the Juicebox Podcast.

Sarah Hi. (0:15) I'm Sarah Gebauer. (0:16) I'm an anesthesiologist and also the mom of a type one diabetic kid. (0:21) I get to do all kinds of cool stuff with AI, and I'm thrilled to be back here today, talking about AI stuff, which is what I do when I'm not in the operating room.

Scott Benner If this is your first time listening to the Juicebox Podcast and you'd like to hear more, download Apple Podcasts or Spotify, really any audio app at all. (0:41) Look for the Juicebox Podcast, and follow or subscribe. (0:44) We put out new content every day that you'll enjoy. (0:48) Wanna learn more about your diabetes management? (0:50) Go to juiceboxpodcast.com up in the menu and look for Bold Beginnings, the Diabetes Pro Tip series, and much more. (0:57) This podcast is full of collections and series of information that will help you to live better with insulin. (1:06) If you're looking for community around type one diabetes, check out the Juicebox Podcast private Facebook group. (1:12) Juicebox Podcast, type one diabetes. (1:15) But everybody is welcome. (1:17) Type one, type two, gestational, loved ones, it doesn't matter to me. (1:22) If you're impacted by diabetes and you're looking for support, comfort, or community, check out Juicebox Podcast, type one diabetes on Facebook. (1:31) Nothing you hear on the Juicebox Podcast should be considered advice, medical or otherwise. (1:36) Always consult a physician before making any changes to your health care plan or becoming bold with insulin.

Sponsor Messages: Contour Next Gen, Cozy Earth, and US Med

Scott Benner This episode of the Juicebox Podcast is sponsored by the Contour Next Gen blood glucose meter. (1:52) Learn more and get started today at contournext.com/juicebox. (1:59) Today's episode is also sponsored by Cozy Earth. (2:03) You can use my offer code juicebox at checkout to save 20% off of your entire order at cozyearth.com. (2:11) Everything from the joggers that I'm actually wearing right now to the sheets I sleep on, the towels I use to dry myself with, and whatever else is available at cozyearth.com. (2:22) Just use the offer code juicebox at checkout. (2:25) The podcast is also sponsored today by US Med. (2:29) Usmed.com/juicebox or call (888) 721-1514. (2:38) US Med is where my daughter gets her diabetes supplies from, and you could too. (2:43) Use the link or number to get your free benefits check and get started today with US Med.

Sarah's Background and New Book on Long-Term Travel

Sarah Hi. (2:50) I'm Sarah Gebauer. (2:51) I'm an anesthesiologist and also the mom of a type one diabetic kid. (2:56) I get to do all kinds of cool stuff with AI, and I've worked in hospitals, clinics, all kinds of settings, professionally, and then also gotten to interact with the health care system. (3:07) We've traveled as a family to more than 50 countries now around the world over the last four years, and I'm thrilled to be back here today, talking about AI stuff, which is what I do when I'm not in the operating room.

Scott Benner You and I recorded together already. (3:21) I'm trying to decide if your episode came out or not yet.

Sarah I don't know if it did, actually. (3:27) Well, exciting news with that also is that I have written a book on traveling long term with kids, and there is there are some sections in there on traveling with diabetes specifically. (3:37) So, yeah, I just thought it would be great to help empower some of the families that I meet to to travel long term. (3:46) You know, as I mentioned, it's we say that it's for our kids, but it's really for my husband and me because we love forcing them to spend time with us. (3:52) And I used to talk to a lot of other parents who are interested in doing something similar, but it just seems too huge and unmanageable. (3:59) So the book is really trying to break that down and to, help people feel like it's something that they can do easily.

Scott Benner I I don't know, Sarah. (4:06) I feel like you're just on here to make me feel bad. (4:08) Like, you're she she said I said before before we started, I said, Sarah, do you have exactly an hour? (4:14) Do we have extra time? (4:14) She says, well, I have to be in surgery later. (4:16) And then and then five seconds later, you're like, oh, I wrote a book. (4:19) You just wrote a book? (4:20) Why alright. (4:21) Let's start with that real quickly. (4:22) Why did you like, how does that happen? (4:24) How do you say to yourself, I'm gonna write a book, then you actually accomplish it? (4:27) Is it published, or is it self published, or what is it?

Sarah Yeah. (4:30) It's self published, and it'll be coming out formally at the end of this month. (4:34) So we'll do a launch then, and we'll I'll let you know, when that happens. (4:39) But, you know, we we talk to a lot of people who who have kids and always say, oh, I would love to do something like that, but we just never quite got around to it. (4:49) And what really stuck with me was one one surgeon that I talked to said, you know, we always said, oh, that would be so great. (4:56) We should do that. (4:57) And then we just never did it, and now my kids are too old. (5:00) They're in college, and we're never gonna get the chance. (5:03) And I just wanted to help people feel like it doesn't have to be as complicated as it as it might seem. (5:10) This is something you can do. (5:11) It's totally manageable. (5:12) You're a parent. (5:13) You do complicated things all the time. (5:15) This is something you can figure out and not and help people not be left with that kind of sense of, oh, shoulda, woulda, coulda. (5:22) And then at some point, it is. (5:24) You know, your kids are old. (5:25) They have their own lives, their own things that are happening. (5:27) And if there is a window that you can do this kind of travel and these kinds of experiences and adventures with your kids more easily.

Scott Benner So And even though Sarah doesn't know it because she's too busy to listen to my silly podcast, her episode is 1617. (5:41) It's called 50 Countries with Diabetes.

Sarah Wonderful. (5:44) I will check it out.

Scott Benner Yeah. (5:46) I love that you didn't listen to it. (5:47) Alright.

Sarah I'm sorry. (5:49) I I honestly, I usually run in silence. (5:52) I almost never listen to podcasts these days. (5:54) I do listen to Buddhist meditation while I run. (5:58) That's

Scott Benner okay. (5:58) Hey. (5:58) Listen. (5:59) Don't give those peep the people listening that idea. (6:01) You you

Sarah have I'm sorry.

Scott Benner No. (6:02) You have to be listening to podcasts when you're doing stuff. (6:04) You can't be in silence. (6:06) No. (6:06) Never silence.

Sarah Right.

Getting into AI and Neural Networks

Scott Benner (6:08) But when you and I were talking last time, it kind of came up that you had an understanding about how AI was working. (6:16) And so why don't you explain to people first, like, how it is you have that understanding, and then we're gonna move forward and talk about some things specific to AI and diabetes.

Sarah Yeah. (6:26) So, actually, during one of our big family trips, it was the first time that I hadn't worked full time since I was, you know, or gone be you know, been in school since I was, you know, tiny. (6:38) And I really got interested in AI and just mostly how you can teach a computer how to understand language. (6:44) I just found that really fascinating. (6:46) And this was kinda in 2022 before the big leap with ChatGPT and and and the neural networks really started. (6:54) So I taught myself all about it, everything I could. (6:57) I watched videos because I'm a huge nerd, from Stanford and MIT and read computer science textbooks. (7:03) I already knew how to code from some previous work I'd done. (7:06) And then just talk started talking to people about what are they doing and what are they interested in and then started, writing a a Substack on health care AI. (7:14) And, since then, have written that steadily and formed a group of physicians interested in health care AI. (7:20) And and then a few years ago, started working at RAND, which is a large think tank in the US doing AI model evaluations for national security risk. (7:28) So really trying to look at, you know, how would we know if some of these frontier models created new kinds of risks for biosecurity? (7:36) And if they do create them, what kind of mitigations different kind of mitigations might be needed to help decrease those risks? (7:43) So and then transitioned to doing more of a health care focused AI evaluation and governance role in a new company that I started, at the beginning of this of last year. So I help hospitals and

Scott Benner An anesthesiologist too. (7:57) Right?

Sarah Yes. (7:58) While I'm traveling

Scott Benner And traveling all the time.

Sarah Yeah. (8:02) I like to I I like variety, it turns out.

Scott Benner I think I'm it's possible I'm the only podcast host who sits holding in a laugh while someone's explaining something that impressive because I just wanna laugh. (8:14) I'll be like, why are how are you doing all of this? (8:16) I'm just like, I'm still stunned by we're gonna get past that part because I wanna get to the AI thing. (8:21) I like how you went from, like, I found it interesting how you could teach a computer to blah blah blah. (8:26) And then you were like, and then I did this and that and started a business, and I worked for the government. (8:29) I don't I'm like, holy you feel like a spy. (8:31) You're not a spy. (8:32) Right, Sarah?

Sarah I am not a spy. (8:34) Although sometimes when we traveled to, you know, very far-flung places, I got a little nervous when, you know, they were, you know, looking through my laptop and such just because, you know, you don't want people to get the wrong impression of what is actually happening.

Scott Benner Oh my gosh.

Sarah Well, you you

Scott Benner I love you. (8:52) I swear. (8:53) I I think I think you'd be disgusted with me inside of thirty five minutes if we were in the same room together, but I think you're fantastic. (8:59) So so explain this to me. (9:01) You sent me a little list that I'm thrilled to have gotten, for our conversation today, and you kinda broke it down to bullets. (9:06) I wanna just follow your bullet points. (9:08) Like Okay. (9:09) So let's lay it out for people and explain to them where it's already being used and how it might be used in the future. (9:15) I'm gonna talk a little bit about how I use it interspersed in inside of the conversation. (9:20) But I think mainly, the general public has, as far as I can tell, either a really, like, kinda harsh reaction to the words, you know, when somebody says artificial intelligence or they're just, like, too Pollyanna about it when they talk about it. (9:37) I don't really hear anybody talk about it, I think, thoughtfully in common conversation is my point. (9:43) Does that make sense?

Sarah Totally. (9:46) Yeah. (9:46) And I think I think there are a lot of reasons for that. (9:50) And one of them is that I don't think the AI community has done a good job of explaining what AI is because we all have been using AI for a long time.

Scott Benner Mhmm.

Sarah AI is in everything now, but for diabetes, for example, it has been for a long time anyways. (10:07) AI is kind of a big circle, and within that is a small circle, which is machine learning. (10:16) Machine learning is old, and that's, you know, kind of looking at data and predicting patterns and that kind of technology. (10:25) That is actually encompassed within the the umbrella of AI, generally. (10:29) Mhmm. (10:30) What is new is neural networks. (10:32) With most ML, to find a pattern, you would kinda say, these are the things that we think might predict a pattern. (10:42) So, you know, kinda look in the data, say, okay. (10:45) This seems more related. (10:46) This seems less related. (10:48) This is how we can kinda group these things together. (10:51) What neural networks can do and what's really exciting is it can look at a huge, huge amount of data and find patterns and synthesize that information. (11:01) And it doesn't have to be told this is where the connections might be. (11:05) It can find those connections on its own. (11:07) And so, you know, it is superhuman in that way. (11:10) And I think there's this, you know, there's this tension between as we know, AI is great at some things and terrible at other things. (11:19) So AI is already superhuman at doing a lot of things, like finding patterns, as I mentioned. (11:24) But, also, when I was an undergrad, I did chemistry research on protein folding. (11:30) And it would take months and months to figure out how a protein actually folded in real life. (11:37) And this was a this was a huge task. (11:40) And AI now, with the help of John Jumper, who won the Nobel Prize a few years ago in chemistry, and the team at Google DeepMind found a way to figure that out within minutes. (11:51) So that used to be this huge problem in chemistry, and now it's not a problem. (11:55) Now it's completely defined. 
(11:56) It's completely solved. (11:57) I mean, obviously, there are a few outliers, but that kind of ability, I think, makes people both excited and nervous. (12:06) But then in other ways, you know, until very recently, the chatbots were not able to count the number of r's in strawberry. (12:15) People might have seen some of those online as well. (12:19) You know, if you asked it how many r's strawberry had in it, it would just get a wrong answer over and over and over. (12:26) So and it's still you know, to me, it still can't book a flight. (12:30) So, you know, if it can't book a flight, it's, you know, it's not that superhuman.

Scott Benner Is it not gonna pivot again though? (12:38) Because that I listen. (12:39) I'm gonna say a lot of names. (12:40) I don't I don't know this guy's name. (12:42) So there's a guy who's been huge in this space, took a break, came back, and then coded a a claw bot or something like or claw bot or something, and then didn't ChatGPT just hire him? (12:53) Like, it aren't we getting towards agents that work for you?

Midroll Sponsors: Cozy Earth & US Med

Scott Benner Friends, I just placed my order at cozyearth.com. (13:01) They're today's sponsor, and I'm here to tell you about them. (13:04) Use my offer code juicebox at checkout when you buy, and you'll save 20% off of your entire order. (13:10) That's everything in your cart at cozyearth.com. (13:12) Save 20% with the offer code juicebox. (13:16) Now why am I excited? (13:17) Well, I just ordered the Cozy Earth blanket. (13:21) It's the viscose bamboo blanket. (13:23) I'm super excited about it. (13:25) It looks comfy as can be, and it's gonna go so well with the sheets that we already have from Cozy Earth. (13:30) Now, yeah, I'm a bit of a a Cozy Earth convert, I guess. (13:34) I'm sitting here in my joggers. (13:36) I used my towels coming out of the shower this morning. (13:39) I slept on my sheets last night. (13:40) Slept like a baby, by the way. (13:42) Cozyearth.com. (13:44) They pretty much have everything you want. (13:46) Use the offer code juicebox to save 20% at checkout on skin care, women's and men's clothing, bath and sleeping accessories. (13:55) And don't forget, Valentine's Day is coming up quickly. (13:58) Get those pajamas. (13:59) Cozyearth.com. (14:00) Use the offer code juicebox at checkout to save 20% off of your entire order.

Scott Benner I used to hate ordering my daughter's diabetes supplies. (14:10) I never had a good experience, and it was frustrating. (14:13) But it hasn't been that way for a while, actually, for about three years now because that's how long we've been using US Med. (14:21) Usmed.com/juicebox or call (888) 721-1514. (14:30) US Med is the number one distributor for Freestyle Libre systems nationwide. (14:35) They are the number one specialty distributor for Omnipod Dash, the number one fastest growing Tandem distributor nationwide, the number one rated distributor in Dexcom customer satisfaction surveys. (14:47) They have served over 1,000,000 people with diabetes since 1996, and they always provide ninety days worth of supplies and fast and free shipping. (14:57) US Med carries everything from insulin pumps and diabetes testing supplies to the latest CGMs, like the Libre 3 and Dexcom G7. (15:07) They accept Medicare nationwide and over 800 private insurers. (15:12) Find out why US Med has an A+ rating with the Better Business Bureau at usmed.com/juicebox, or just call them at (888) 721-1514. (15:24) Get started right now, and you'll be getting your supplies the same way we do.

AI Agents and Transforming Workflows

Sarah Yes. (15:29) We're getting a lot closer. (15:31) So the technology is improving so quickly, and that's one of the issues that I think is important to talk about today too is what is out there and what can be done is not the same as what's being done in the health care field. (15:46) Because health care is so understandably conservative and risk averse, a lot of what is possible is not takes years to translate that just because of the systems we have with the FDA and devices and and all those concerns, which are there for a very good reason. (16:04) It is making it hard for them to regulate AI in a meaningful way. (16:08) As you mentioned, there's agents are the future. (16:11) Already, most of the AI that you use is an agent. (16:15) Yeah. (16:15) Meaning that it has and do you think people should I define the term agent?

Scott Benner Go ahead. (16:20) Yeah, please.

Sarah Okay. (16:21) So an agent is basically a brain. (16:23) So think of it as a a little brain who has that has access to different tools. (16:28) And tools might be something like the Internet. (16:31) The Internet could be a tool. (16:33) The instructions on how to create a PowerPoint might be a tool. (16:36) Instructions on how to book a flight in the future might be a tool. (16:40) So it has kind of all these different contexts and tools that it has access to. (16:46) So it can decide for any given question or task, which of these tools should I use to do that either alone or together? (16:56) And then how can I put those together to make a good output?

Scott Benner Mhmm.

Sarah So when people talk about agents, it's really it's often a compilation of different AI tools that are being controlled by a central AI tool.
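
A minimal sketch of the idea Sarah describes, a central "brain" that decides which tools to use for a task, might look like the following. Everything here is hypothetical and for illustration only: the tool names, the keyword lists, and the keyword-matching router, which stands in for the language model that would make this decision in a real agent.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Tool:
    name: str
    keywords: tuple[str, ...]   # words that suggest this tool is relevant (assumed)
    run: Callable[[str], str]   # takes the task text, returns a result


def web_search(task: str) -> str:
    # Placeholder: a real tool would call out to the Internet.
    return f"[search results for: {task}]"


def make_slides(task: str) -> str:
    # Placeholder: a real tool would follow instructions for building a deck.
    return f"[slide deck about: {task}]"


TOOLS = [
    Tool("web_search", ("find", "look up", "latest"), web_search),
    Tool("make_slides", ("slides", "powerpoint", "presentation"), make_slides),
]


def choose_tools(task: str) -> list[Tool]:
    """Stand-in for the 'brain': pick every tool whose keywords appear in the task."""
    lowered = task.lower()
    return [t for t in TOOLS if any(k in lowered for k in t.keywords)]


def run_agent(task: str) -> list[str]:
    """Run the chosen tools in order and collect their outputs."""
    return [f"{t.name}: {t.run(task)}" for t in choose_tools(task)]


if __name__ == "__main__":
    for line in run_agent("Find the latest CGM research and make slides"):
        print(line)
```

In this toy version, a task like "book a flight" matches no tool and returns nothing, which mirrors the point made earlier in the conversation: an agent can only do what its tools allow.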

Scott Benner Okay.

Sarah Does that make sense?

Scott Benner It does. (17:12) Actually, I'm I guess, somewhat unironically, I have an agent scraping a Facebook post for me right now. (17:20) Mhmm. (17:20) Like, so I put up questions to explain to people what one of the ways I use it. (17:26) I will put up a question that I'm trying to crowdsource how everybody feels about something. (17:31) Been doing this for years and years and years. (17:33) Right? (17:33) What are your I have an exhaustive list, for example, of, like, what people's struggles are with type one diabetes. (17:39) We created the entire Grand Rounds series off of a 90 page document that asked people the question simply, what do you wish someone would have said to you at diagnosis? (17:48) What do you wish someone would not have said to you at diagnosis? (17:51) And

Sarah Wow.

Scott Benner We used to just put up the post and then get all the responses back, and then Isabelle would take all of those responses, read through them, collate them, say, oh, this one and this one are the same. (18:04) She'd kinda put them together. (18:06) She did that all for me in the background. (18:08) Now I send an agent to a post. (18:10) It scrapes it out, and then I have it do that. (18:13) It takes about, like, ten minutes maybe.

Sarah And Isn't it amazing?

Scott Benner Yeah. (18:18) Yeah. (18:19) No. (18:19) It's it's absolutely fantastic. (18:21) And I'm 54. (18:23) I don't know how old you are. (18:24) I'm sorry.

Sarah Forties. (18:25) Yeah.

Scott Benner Oh, you're in your forties. (18:26) Okay. (18:27) When I look at computers right now and I look at all this, I go, this is what was promised to me when I was a kid. (18:32) And Right. (18:33) So when I look up and I see people scared about it, I'm like, alright. (18:36) I get that everybody thinks the Terminator's gonna come and, like, step on your skull and everything, like and and that might and all I could say to that is is, like, maybe, but we could get there in a lot of different ways. (18:46) If we can get through this and make it work for people, I think it's gonna be magical in what it does. (18:53) Like, is it gonna change the job market? (18:54) I'm sure it will. (18:55) Like, I mean, because listen. (18:57) I don't really talk about it a lot, but this podcast is huge. (19:02) I run it completely by myself.

Sarah That's amazing.

Scott Benner I don't have a marketing team. (19:07) I don't have a writer. (19:08) I don't the Rob edits the audio. (19:10) But, I mean, like, the rest of it, like and I used to do that too, by the way. (19:13) It's just I didn't sleep much. (19:15) So and so, like, you know, all the things that I accomplish in the course of a day are are weeks' worth of work. (19:24) Or you say, well, you could have hired somebody, but no. (19:26) I could not have hired somebody. (19:28) I don't have that kind of money. (19:29) I couldn't have done that. (19:29) So it just would not have gotten done. (19:32) And it's I don't know. (19:33) It's it's just really fantastic. (19:35) And when people think about it in their diabetes technology you said something that I I meant to get back to. (19:40) I'm sorry. (19:41) I'm pivoting. (19:41) But you were like, health care is risk averse, but there's something specific about it. (19:47) Right? (19:48) Like, I forget I'm I'm a little messed up here because I don't have all my words that I need. (19:52) But in health care right now, give people examples of where AI is being used right now in their diabetes technology, then I'll ask my question. (20:00) I'm sorry. (20:01) Go ahead.

Sarah Well, first, I wanna say how amazing it is you're able to do all of this on your own. (20:07) I can't imagine how much work that is.

Scott Benner It's just every moment I'm awake. (20:12) That's all. (20:12) And

Sarah Well, that's all it is. (20:15) It's all your time. (20:16) And, and that is I mean, I I hope that as the agents get better and you can offload even more work to them, you know, which I find myself doing, you know, every few months, there there seems to be a meaningful improvement in in what the agents can do. (20:33) And I find myself offloading more work to them on a regular basis. (20:39) So I

Scott Benner My goal is to have an agent who's thinking about the podcast the way I am right now and telling other agents what to do.

Sarah Yeah. (20:46) I think that is actually possible now.

Scott Benner Yeah. (20:48) But that's that's where I'd like to be because I have a plan. (20:51) I know how I run my day and my week. (20:53) If something else was, like, overseeing that, that would be a big deal. (20:57) Then I could actually sit down and, like, you know, read my emails not once a month or once every two months. (21:03) I could actually do it, you know, every couple of days and have, like I could do more human things, I guess, is what I'm talking about.

Sarah Exactly. (21:10) And I think that's the promise. (21:11) I think that we are all so used to, you know, the minutiae of using computers. (21:19) You know, computers were supposed to speed us up, and I think, you know, a lot of times we ended up adapting to the computers instead of the computers truly adapting to us.

Scott Benner Oh, a 100%. (21:28) Computers just cause different busy work.

Sarah Yes. (21:30) That's it. (21:31) Yeah. (21:32) I mean, how many, I mean, I've created many, many PowerPoint presentations in my life. (21:37) Moving a text square from one side of this of a, you know, PowerPoint to the other side, is just not a meaningful use of my time in any situation. (21:47) And the fact that now AI can produce beautiful PowerPoints in, you know, thirty seconds that are are very nice and actually make sense, I mean, to me, that's a meaningful improvement.

Scott Benner Sarah, I recoded my entire website over the weekend. (22:02) Yes. (22:02) I don't know anything about coding.

Sarah Right.

Scott Benner Yeah. (22:06) My website is so much better than it was on Friday. (22:11) I completely changed the search. (22:14) Like, right now on the front page, the last four episodes of the podcast are right in front of you. (22:19) You can arrow through and go back, I think, through, like, the last 30. (22:22) You wanna listen online, most people don't listen online. (22:25) There's a search audio. (22:26) If I just type in 1617, your episode is in front of me now. (22:30) I can click on it.

Sarah I see.

Scott Benner Go listen to it in Apple or in Spotify or, you know, right here. (22:35) If I wanted to type it into a different search box, 1617, now it searches the website. (22:40) It takes me right to the web page that I created for your episode. (22:45) There's now a beautiful menu on the side that lists out the guides and the estimators, the series, the collections, different links in the site. (22:53) Like, I completely remade juiceboxdocs.com, which is a website where you guys can send in, like, great doctors that you use. (23:01) It's now searchable. (23:03) It now tells you if the doctor has type one. (23:06) You can search by that. (23:08) You can submit your own doctor, which used to go into my inbox. (23:11) Then I had to sit down and then go in and make a text box on the web page and recreate that. (23:16) Now it goes into a Google Doc somewhere where someone looks over it with human eyes and then slides it into the other page of the Google Doc and it appears on the website. (23:26) Right?

Sarah It's amazing. (23:27) Right.

Scott Benner Not only that, but you can click on a phone number when you're in there, call the doctor, go to their website, launch a Google Map for it. (23:35) Have you ever heard me talk on the podcast about, I don't really understand what the podcast does for people? (23:41) I make it, and I know it helps them because they tell me, but I'm trying to figure out functionally what does that mean. (23:48) Like, if I if I told you to sit down and be me, what is it I'm doing? (23:52) I know that maybe is sort of existential, but I realized I was never going to figure it out exactly. (23:59) So I just loaded in all of my transcripts, and I asked AI, and it explained to me why people interact well with me.

Scott Benner The Contour Next Gen blood glucose meter is sponsoring this episode of the Juicebox Podcast, and it's entirely possible that it is less expensive in cash than you're paying right now for your meter through your insurance company. (24:22) That's right. (24:22) If you go to my link, contournext.com/juicebox, you're gonna find links to Walmart, Amazon, Walgreens, CVS, Rite Aid, Kroger, and Meijer. (24:34) You could be paying more right now through your insurance for your test strips and meter than you would pay through my link for the Contour Next Gen and Contour Next test strips in cash. (24:46) What am I saying? (24:47) My link may be cheaper out of your pocket than you're paying right now even with your insurance. (24:54) And I don't know what meter you have right now. (24:57) I can't say that. (24:58) But what I can say for sure is that the Contour Next Gen meter is accurate. (25:02) It is reliable, and it is the meter that we've been using for years. (25:06) Contournext.com/juicebox. (25:10) And if you already have a Contour meter and you're buying test strips, doing so through the Juicebox Podcast link will help to support the show.

Sarah How? (25:19) What did it say? (25:19) I'm so curious. (25:20) I mean, I have some ideas, but I I'm curious what the AI thought.

Scott Benner I'll pull that up, and we can talk about it at the end. (25:24) Okay? (25:25) Okay. (25:25) Okay.

Sarah Great. (25:26) And and then I just wanted to pull out one other thing that you said. (25:29) You said it allows you to do human things. (25:31) It allows you to do human things, and it does things that you wouldn't have been able to do otherwise.

Scott Benner Absolutely.

Digital Twins and the Future of Medicine

Sarah And I think that is really what we're trying to get to with AI, and I think it really directly applies to a lot of the diabetes pieces as well. (25:44) Because, really, what we are trying to do, and I think what we're moving towards with diabetes, is that we're able to analyze data in a way we never were before. (25:54) We're able to do precision medicine and individualized medicine in a way that was never possible previously. (26:02) And then we're able to figure out how well things work in a way that no human would have been able to.

Scott Benner Mhmm.

Sarah So I think that's the that's the hope. (26:13) So kind of big picture, what AI is doing now, you know, I think we all know that, you know, the closed loop predictions, the predictive technology, that's all AI, technically. (26:27) It is older AI for the most part. (26:31) It's mostly mostly ML, which is the older kind of AI technology. (26:36) We are going to see more personalization, more things like exercise prediction, better dosing. (26:43) And then pretty soon, we're gonna start seeing digital twins, and AI that can, really be more close to you. (26:52) And then I think also looking at, larger population health and trying to figure out better ways to predict diabetes as a whole and predict things that influence care and improve care.
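
As a rough illustration of the older "look at data and predict the pattern" style of ML mentioned here, the sketch below fits a straight line to a few recent glucose readings and extrapolates it forward. This is an assumption made purely for illustration: the function name, the readings, and the linear model are invented, and real closed-loop systems use far more sophisticated models than this.

```python
def predict_glucose(readings: list[float], interval_min: float, horizon_min: float) -> float:
    """Fit a least-squares line to evenly spaced readings and extrapolate it.

    readings     -- recent glucose values in mg/dL, oldest first (toy data)
    interval_min -- minutes between consecutive readings
    horizon_min  -- how many minutes past the last reading to predict
    """
    n = len(readings)
    if n < 2:
        raise ValueError("need at least two readings")

    times = [i * interval_min for i in range(n)]
    mean_t = sum(times) / n
    mean_g = sum(readings) / n

    # Ordinary least-squares slope and intercept for a single predictor.
    covariance = sum((t - mean_t) * (g - mean_g) for t, g in zip(times, readings))
    variance = sum((t - mean_t) ** 2 for t in times)
    slope = covariance / variance
    intercept = mean_g - slope * mean_t

    return intercept + slope * (times[-1] + horizon_min)


if __name__ == "__main__":
    # Invented readings taken every 5 minutes, rising steadily.
    recent = [100.0, 105.0, 110.0, 115.0]
    print(predict_glucose(recent, interval_min=5, horizon_min=30))
```

The point of the sketch is only the shape of the approach: a person chooses what to feed the model (here, time and recent readings), and the model projects the trend.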

Scott Benner So talk a little more about what digital twins means.

Sarah Yeah. (27:09) Great question. (27:10) It sounds really bizarre and scary, I think. (27:15) But what it really is is a digital version of all the data we have about you. (27:24) So that would be things like, you know, diabetics have so much data about them that most people don't have. (27:32) You know, even if you only look at the glucose monitors, you can guess at what was happening during multiple points of the day. (27:40) And then if you add, you know, test results and other pieces of data in there, you basically have a version of yourself that is just a whole bunch of data, and that is a digital twin. (27:55) The advantage of that is, maybe not now, but in the future, the thought is that you can try stuff on the digital twin before you try stuff on the real person. (28:07) And that, hopefully, the digital twin has enough data to be a realistic representation of you and how your body will respond. (28:14) And that way, you know, really, what we've been doing for a long time is more or less experimenting and being like, well, here's... I mean, as a doctor, I could say this. (28:23) We give somebody medicine. (28:24) We say, alright. (28:26) Well, you know, it works for a lot of people. (28:28) It doesn't work for some people. (28:29) You know? (28:30) I hope it works for you. (28:31) And the hope is that with more digital twins and more data about people, we'll be able to make much better predictions about what kind of treatment, what kind of therapies will be most efficacious for different subsets of people and even for different specific people, which really is the change. (28:48) K. (28:49) Already, I'm getting alerts in my medical inbox when I prescribe something saying this person has had testing for a specific enzyme, for example, that speeds up the metabolism of certain kinds of medicines.
(29:02) And so if you prescribe this, you know, either you want to avoid prescribing it depending on what the medicine is, or if you prescribe it, it may not work as well, or it may take longer to get out of the system. (29:14) So already, we're seeing a little bit of that, but that's just one data point, really. (29:18) That's just that one lab test. (29:20) What I'm talking about is having the whole set of all the data points of you and being able to test things on you before it actually gets to the person themselves to make sure it actually will work.

AI for Diabetes Calculators & FDA Regulation

Scott Benner Because we have all the data already. (29:32) It just doesn't... That's it. (29:33) It doesn't do anything. (29:34) See, I'm overwhelmed with that idea right now that I've recorded 1,800-plus episodes and that if you kind of colloquially talk about it, people say, oh, I listen to the podcast and my A1C goes down, which means that the answers to your issue are in there somewhere. (29:52) And so if they're in there, but they have to come out conversationally, isn't there a way to, like, pick through them and distill it even more? (30:00) Right? (30:00) Like, so the podcast is great for people who enjoy conversationally listening to something, who want long-form talking. (30:07) Right? (30:08) But some people just don't want that. (30:10) And some people will tell me I've listened over and over again, and nothing's happened to my A1C.

Sarah Interesting.

Scott Benner They just don't learn the same way. (30:17) Right. (30:17) So, you know, it feels like there's a big dark room and all the answers are in it, but I don't have a light. (30:22) I can't turn it on. (30:23) And even if I could turn it on, what I would find is millions and millions and tens of millions of words that have to be gone through to figure out what is valuable and, you know, what isn't. (30:34) And I've been thinking about that for years, and now all of a sudden, it's like, it's right here. (30:39) I tried a service the other day. (30:40) I don't think it's quite ready for prime time where you load all of them in. (30:43) You can just talk to it, like, you basically create a large language model of just the transcripts. (30:49) Mhmm. (30:49) So it's only going to that. (30:51) It's close. (30:52) It didn't do a bad job, but the engine was like GPT-4, and it just wasn't quite right.

Sarah Right. (30:57) Yeah.

Scott Benner I thought, okay. (30:58) This company, like, if they keep doing this, hopefully, they'll stay in business or somebody else will figure it out. (31:03) And maybe, you know, a couple of years later. (31:05) But then you immediately run into the problem of you have to give somebody a prompt, and then they have to ask it the right question to get the answer out of it, which is unlikely. (31:14) Like, that's probably not going to happen. (31:16) Right. (31:17) So then the model needs to be able to already know your questions even if you don't know them so that it can serve you the information. (31:25) But I'm telling you that before I'm done, there is gonna be a prompt. (31:28) Juiceboxpodcast.com is gonna be a prompt when I leave. (31:31) It's gonna have questions that you don't even know to ask. (31:35) You're gonna click on them, and it's gonna tell you the answers. (31:38) And that's gonna be that. (31:39) But the problem there becomes, like, what if someone types into the prompt, my insulin-to-carb ratio is this, my sensitivity is that, I'm about to eat 50 carbs, blah blah blah. (31:52) What should I bolus? (31:54) There's enough conversation inside of the podcast to answer that question.

Sarah Right.

Scott Benner And then that becomes a class two medical device.

Sarah Well, the FDA just came... (32:05) The FDA just loosened the rules.

Scott Benner You thought they loosened them?

Sarah (32:09) Well, they said they weren't gonna take any action with ChatGPT or Claude on health care advice.

Scott Benner Perfect. (32:19) Because I have an estimator. (32:22) I have to call it an estimator on my website where you put in just your weight, and it gives you starting settings for everything. (32:31) Wow. (32:32) Because what I figured out one day is, I went into the office. (32:37) I was talking to the practitioner, and I said, you know, I think Arden's settings are messed up. (32:41) Like, I'm not sure, kind of, like, where to, like, reset them, way before I knew what I was doing. (32:47) And she just said, how much does she weigh? (32:49) And I told her, and then she pulls out a piece of paper, and she's writing, and she's scribbling and scribbling and writing and talking and blah blah blah. (32:56) And then, you know, over conversations with Jenny, I realized that there are prescribed calculations they do off of your weight to give you starting settings for everything, carb ratio, basal, sensitivity, the whole thing.

Sarah Oh, wow.

Scott Benner And so I was like, oh, okay. (33:12) So I'll, like, find out what that math is, and then I'll just put it all together in one place. (33:18) And I put it together, and I was like, okay. (33:21) Now this is a tool. (33:22) I can't put a tool up there. (33:23) You can't type your weight in because then it's a diagnostic tool. (33:28) But if I put a slider up there and call it an educational tool and you get to pick a weight just to see what happens to the settings, it's not my fault if you pick your own weight. (33:37) But that kind of stuff is ridiculous. (33:40) Like, do you know what I mean? (33:41) Like, because everyone should have access to being able to reimagine their settings like that. (33:48) That shouldn't be a big deal, I don't think. (33:50) It doesn't say that the settings are perfect. (33:52) If your basal is set at 1.5 an hour because you don't bolus for your food well and your doctor just keeps pushing your basal up and over-basals you, you could, like, learn one day, like, oh gosh. (34:04) You know what? (34:05) It seems like my basal should be more like one an hour. (34:08) And my carb ratio is... wait. (34:10) One unit covers 15. (34:12) I've had it as one unit covers, you know, the wrong thing the whole time. (34:16) Like, it would give you a place to kinda start over again. (34:20) And I just think that if you could then spend a little time getting your settings together, then go to the other estimator where you can put your settings in, the carbs, the fat, the protein of what you're eating, and it breaks out exactly how a bolus would look. (34:33) Is that not what we want for people? (34:35) Like, do you know what I mean? (34:36) Like, that's... so I'm glad to hear that you feel like they loosened it up because

Sarah Yeah. (34:41) Yeah. (34:42) They basically said and what a first of all, what an amazing tool, and I can't imagine how helpful that is and will be for for so many people. (34:51) I mean, just having these kind of resources

Scott Benner Yeah.

Sarah That are on your website, especially, you know, I remember it wasn't so long ago, I guess, four or five years ago that I was just starting out as a type one diabetes mom. (35:03) And even with all the medical education and knowledge that I had, it was still completely overwhelming to figure out, you know, what you should be dosing at different levels. (35:12) And I definitely relied on your podcast. (35:14) I definitely was a person who learned from your conversational approach and appreciated it. (35:21) But I think, like you said, the more tools and the more ways you allow people to interact with the information, the better experience people are going to be able to have for themselves. (35:33) And at some point, they might have their own agent who knows them and knows their personality and knows, you know, where they usually struggle, and might even be able to just engage on its own with your tool and then bring that information back to them without them having to search it out, because their own chatbot will proactively know, oh, look. (35:54) Hey. (35:54) There's this problem coming up. (35:56) I'm gonna bring this information to the person.

Scott Benner Yeah. (35:59) Or leave me out of it. (36:00) I basically just vibe coded it, and I just vibe explained it to you. (36:04) At this point, you could go to a window and say, what are all the implications that, you know, are taken into account when I'm bolusing for food? (36:13) It'll just tell you. (36:14) ChatGPT will tell you about the Warsaw method. (36:17) It doesn't need me to tell you about it anymore. (36:19) To make your point about the agent and the food, like, if you know your sensitivity and your carb ratio, that's pretty much what you need to know. (36:28) So if you know your sensitivity, your carb ratio, and the impacts of what's coming from the food, fat, protein, carbohydrates. (36:37) Right? (36:38) Boom. (36:38) Here's the, you know, it's a 4.6 unit bolus, and then you need another 1.6 units over three hours to cover the fat. (36:46) Like, something like that. (36:47) Right? (36:48) And then you said to it, well, you know what? (36:51) This is a, I don't know. (36:52) Is this a cheeseburger Happy Meal? (36:54) Remember that. (36:55) Remember that I'm having a cheeseburger, that these are the carbs, the protein, the fat for a cheeseburger Happy Meal. (37:00) And by the way, here it is when I do it with a milkshake. (37:03) And then just build a library behind your... you could have an app on your phone in two seconds that you could literally just pull up and hit a search bar and type in cheeseburger Happy Meal, and it'll tell you how to bolus.

Sarah Exactly.

Scott Benner Based on your settings.

Sarah Based on you.
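
As a rough illustration of the arithmetic being described here, the following Python sketch combines a carb ratio, a sensitivity (correction) factor, and a small saved-meal library. It is a teaching sketch under stated assumptions, not the estimator on the website: the `Settings` fields, the `MEAL_LIBRARY` entries, and every number in it are hypothetical placeholders, and it leaves out the extended fat and protein portion mentioned in the conversation.

```python
from dataclasses import dataclass


@dataclass
class Settings:
    """Hypothetical personal settings; all values are supplied by the user."""
    carb_ratio: float    # grams of carbohydrate covered by 1 unit of insulin
    sensitivity: float   # mg/dL that 1 unit is expected to lower glucose
    target_bg: float     # target glucose in mg/dL


# Hypothetical saved meals mapped to carbohydrate grams (made-up placeholder values).
MEAL_LIBRARY = {
    "example meal": 45.0,
    "example meal with milkshake": 105.0,
}


def carb_bolus(carbs_g: float, settings: Settings) -> float:
    """Units to cover the carbohydrates: carbs divided by the carb ratio."""
    return carbs_g / settings.carb_ratio


def correction_bolus(current_bg: float, settings: Settings) -> float:
    """Units to bring glucose back to target; never negative in this sketch."""
    return max(0.0, (current_bg - settings.target_bg) / settings.sensitivity)


def estimate_for_meal(name: str, current_bg: float, settings: Settings) -> float:
    """Look up a saved meal (case-insensitive) and add carb and correction units."""
    carbs = MEAL_LIBRARY[name.lower()]
    total = carb_bolus(carbs, settings) + correction_bolus(current_bg, settings)
    return round(total, 1)
```

Keeping the settings in one object and the meals in a separate lookup mirrors the idea in the conversation: the meal entry stays the same, and the result changes with whoever's settings are passed in.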

Using AI to Build Custom Tools

Scott Benner And so yeah. (37:20) Right. (37:20) And so, like, that's that's not just, like, futuristic, but I'm telling you that me sitting here right now, I think I could build that app.

Sarah Oh, you definitely could. (37:30) Yeah. (37:30) I mean, my kids have been experimenting with all the tools and building apps pretty frequently. (37:37) I mean, it's amazing how much it's democratized the ability to create a website and an app and different tools. (37:46) Like, and because these are tools, and these are tools for people to use. (37:49) And, again, they're for the people, but there have always been people with great ideas who just didn't have access to a programmer and resources and money to build the thing that would actually help them and help other people.

Scott Benner So Yeah.

Sarah I think it's amazing that it's common. (38:03) It has really been made available to everyone now.

Scott Benner I swear to you, on Sunday afternoon, I hate the menu at the top of my website, and I finally just was like... let me start by saying, I'm not using the free version of one of these things. (38:17) Okay? (38:17) So I'm paying a fair, you know, some of them are, you know, a couple hundred dollars a month. (38:22) But you get deep research, you get unlimited, you know, tokens, like, you can pretty much go as much as you want. (38:28) I've been doing a lot of it in Gemini.

Sarah Mhmm.

Scott Benner And that's been working really well for me. (38:32) But I'm talking about Gemini Pro, their Ultra plan. (38:36) Like, I think it's, like, $250 a month or something like that. (38:39) Don't just go to the window, like, to the free version and be like, tell me how to take... because it's gonna make more mistakes. (38:44) Right? (38:44) So, anyway, I go to the window and I just say, look. (38:48) Go to juiceboxpodcast.com and look at the menu at the top of the page. (38:53) And then it comes back and I go, I hate that menu. (38:56) Can you write a better one? (38:58) And it just did. (39:00) Yeah. (39:00) And that's it. (39:02) And then I looked at it and I went, oh, I don't like that. (39:04) I'm like, put this here. (39:05) Can we put it on the right side of the page? (39:07) Can we do this? (39:08) You know, when you mouse over something, I'd like it to light up a little bit. (39:11) And then it was a little, like, too much. (39:13) I was like, not that much. (39:14) And then it dialed back a little bit. (39:16) And then I was like, here's all the links I want to be in there. (39:19) Put them in there. (39:19) Make them alphabetical. (39:21) Except I want the Pro Tips, the Bold Beginnings, and this one to be the top three, and then it can be alphabetical. (39:25) There's no coding involved in it. (39:27) Like, I just literally spoke to it what I wanted to happen. (39:30) It made me feel like the typing was slowing me down, and I should get a headset.
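
The menu rule described here (a few pinned links first, everything else alphabetical) is the kind of thing the AI would have turned into a few lines of code. A minimal Python sketch of that ordering rule follows; the function name `order_menu` and the link labels in the test are assumptions for illustration, not the code that was actually generated for the site.

```python
def order_menu(links: list[str], pinned: list[str]) -> list[str]:
    """Return pinned links in their given order, then the rest alphabetically.

    `links` and `pinned` are hypothetical lists of menu labels.
    """
    # Keep only pinned labels that actually exist in the menu, preserving their order.
    pinned_present = [label for label in pinned if label in links]
    # Sort the remaining labels case-insensitively.
    rest = sorted(
        (label for label in links if label not in pinned),
        key=str.casefold,
    )
    return pinned_present + rest
```

Sorting with `str.casefold` keeps capitalized and lowercase labels in one alphabetical run instead of grouping capitals first.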

Sarah You probably should. (39:36) Okay. (39:36) Those work quite well with these AI tools now. (39:40) But I think what's interesting to me is that now that's what the coders will say too. (39:44) You know, engineering used to be coders, you know, people think of the hackers, you know, typing away with, you know, a lot of semicolons and, you know, the whole screen and everything. (39:56) And now even they are doing a lot more conversational coding. (40:00) I mean, obviously, it's easier for them to see the whole architecture, and that's, you know, I think, just like with any skill, that value is still there.

Scott Benner (40:07) Yeah.

Sarah So, yeah, I think when people think about coders, they think about, you know, people actually typing code. (40:13) And I think if you talk to programmers now, you'll find that even that job itself has changed so much. (40:19) And now a lot of them are interacting in a mostly natural language way with tools that help them. You know, they say something similar to what you are saying. (40:29) You know? (40:29) You have this desire to do something different, and, you know, how can you change it for me? (40:34) What would the code look like? (40:35) It spits out the code. (40:36) Of course, they're able to change that code more easily and to modify it in a more sophisticated way, but that's kind of how the job is progressing very quickly. (40:47) And to me, that is interesting too. (40:49) Basically, the programmers are becoming supervisors for the agents that are going out there and coding things for them. (40:57) Mhmm. (40:57) And they're basically managing all these agents, you know, giving them context, giving them information like you would for any employee. (41:04) It's neat for them, I think, too because it used to take them days and hours and weeks and months to create one thing.

Scott Benner Yeah.

Sarah You know? (41:12) You're typing, typing, typing. (41:13) Finally, you get something that, like, kind of works, and then you would, you know, then you would debug it for another several months. (41:19) And now you can do that in, like you said, days, hours, minutes in some cases.

Scott Benner When I was, like, 12 or 13, I saved money for, like, two and a half years. (41:29) And I went to RadioShack, and I bought a computer. (41:32) And I went home, and I had this book, it just had code in it. (41:36) And I spent an entire day, like, typing the code from the book into the computer. (41:41) And I remember hitting, you know, enter, and it just failed. (41:45) Right. (41:45) And so I went back and spent hours reading through the book, and then I found, like, literally the one place I put a comma in the wrong place or something wrong.

Sarah (41:53) Right?

Scott Benner And I hit enter, and a stick figure popped up on my television because the computer was attached to the television. (42:02) It did one jumping jack and it stopped.

Sarah Yes.

Scott Benner And I put that computer back in a box and returned it and got my money back. (42:09) And I was like, this ain't ready for me yet. (42:12) And now today, I'm telling you, like, you have to kind of, like, listen to what I'm saying, listen to what Sarah's saying, but imagine it in the hands of the company making your insulin pump. (42:23) We already got to see it with Loop and Trio and AndroidAPS, like, all the, you know, the people online coding, like, you know, algorithms for insulin delivery. (42:32) If you really stop and listen to what's being said, what this stuff is good at is pattern recognition. (42:38) Right? (42:39) Like, that kind of stuff. (42:40) Yep. (42:40) And forecasting glucose ahead, adjusting your basal insulin, like, delivering correction doses. (42:46) Like, this is all, like, kind of forecastable stuff. (42:49) And it's moved into all the other, you know, all the pump companies have a version of it now.

Sarah Yeah. (42:55) Absolutely.

Scott Benner (42:55) What we're trying to wonder is, like, will a company ever get to the point where they're gonna be comfortable making something that is so personal to you? (43:08) And then back to the thing that I stumbled through before that I couldn't really talk about because I couldn't find the right words for, is that this stuff gets to the FDA because it's simple. (43:18) It's pattern recognition. (43:19) If this happens, then do that. (43:20) If this happens, then do this. (43:22) But it doesn't change because if it learned while it was going, then the FDA would need to approve the next thing it was going to do. (43:30) And that can't happen because that'll happen ad nauseam over and over again. (43:35) Like, so that's where the rules have to catch up to the technology, and god knows how long that's gonna take. (43:41) Can you explain that better than I just did? (43:43) But you know what I mean. (43:44) Right?

Sarah No. (43:45) I think that was a great explanation. (43:48) Okay. (43:49) I am hopeful that we will get there, and I think that is the future. (43:53) And I think everyone kinda realizes that that is where we need to get. (43:57) The FDA did make an allowance for AI to have some kind of planned updating, more or less, where you kind of say, when it gets this much information, it will do this, kind of within a certain range. (44:11) So it's still bounded and not just like it's gonna, you know, kinda do whatever it feels like. (44:17) Mhmm. (44:18) So they're kind of inching that way, but I think it's a real struggle to move from devices that are meant to work the same in thousands or hundreds of thousands or millions of patients to devices that are meant to really work differently and possibly very differently in every single person, and figuring out how to manage that in a way that is still safe with all the... you know, humans are often the weak point in a lot of these technologies. (44:51) You know, we do things that we're not supposed to, or we drop them, or we, you know, accidentally put an extra zero when we're typing something in. (45:00) And so figuring out how to guard against some of the possibly very bad things while still delivering the benefit is something that, I think you're right. (45:11) The regulatory agencies, not just in medicine, but I think in all highly regulated industries, so things like defense, education, and those fields, everyone's really struggling with because it's such a different paradigm than we've been used to.
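
One way to picture "planned updating within a certain range" is a setting that may adapt, but only in small steps and only inside limits fixed in advance. The Python sketch below illustrates that idea only. It is not the FDA's mechanism or any pump maker's algorithm, and the function name, parameters, and limits are all hypothetical.

```python
def bounded_update(current: float, proposed: float,
                   lower: float, upper: float, max_step: float) -> float:
    """Move `current` toward `proposed`, but stay inside pre-agreed limits.

    - The change per update is capped at `max_step` in either direction.
    - The result is clamped to the fixed range [lower, upper].
    All limits are assumed to be set ahead of time, not learned.
    """
    # Cap how far a single update may move the setting.
    step = max(-max_step, min(max_step, proposed - current))
    # Keep the new value inside the fixed range.
    return max(lower, min(upper, current + step))
```

However unusual the learned proposal is, the value that comes out can always be checked against limits that were reviewed ahead of time, which is the sense of "bounded" in the explanation above.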

Scott Benner It's different than how we think too.

Sarah Right.

Scott Benner Yeah. (45:28) Like, we think very literally as well. (45:31) Like and it's it's hard for people to jump ahead and have, like, fanciful ideas about what could happen. (45:38) Like, I'm I'm telling you, like, sitting here thinking, is it possible it's I could task something with understanding the value of how I conversate? (45:48) That's not a thing I was gonna get done otherwise. (45:50) I get people's reviews back. (45:51) Oh, Scott's, this or he's approachable. (45:54) Like, they use words like that, but there's actual reasons why it works. (45:58) And I don't know what it is because I'm not doing it on purpose. (46:02) And they don't know what it is because it's just working for them, and nobody's gonna spend the time figuring it out. (46:07) But if I could push a couple of buttons and come back a week later and read a report that explains a little bit about that, I don't know that it would do anything for me, but I don't know that it wouldn't do something for me. (46:17) Like, I just would like a deeper understanding of how it works. (46:20) And I wanna I guess I want a deeper understanding of what how conversation helps people or why it works for some people but not others. (46:28) Because then if I know why it works for some but not others, I might be able to find a way to make it work for somebody else that's not touched by it as well. (46:35) Or just to open up my own mind to understand because I don't think we're gonna get to what all this can actually do if somebody doesn't kinda run forward with their hands up and go like, hey. (46:45) What does this do? (46:46) I'm sitting here right now having the conversation with you for the first time thinking the DIY community for diabetes is amazing. (46:56) Like, each and every one of those people is wonderful. (46:59) Right? 
(46:59) Anybody who put time in sitting down and banging out code to make Loop or, you know, something similar to that, no one will ever be able to thank them, you know, well enough. (47:08) But is there going to be another generation of those people, or are some of those people gonna have their thoughts, like, reignited? (47:16) Like, are you gonna wake up a couple years from now while the industry is struggling to figure out what to do? (47:21) Like, you know, are four guys, you know, connected all over the world and, you know, some wonderful lady who sits down and writes out the whole, like, instruction manual for how you put it together. (47:34) Like, are all those people gonna come back together again or reform, like, a new version of the Justice League or whatever and make a version of this that you just pick your phone up and go, hey. (47:45) I'm going to McDonald's, and I'm buying this. (47:48) And is that it? (47:49) Like, do you know what I mean? (47:50) Like, is that gonna... because it's not not doable.

Sarah I'm so glad you mentioned that because I do think that the diabetes community is so lucky to have so many truly dedicated and interested participants and people who are very active, who have a range of expertise. (48:08) That is really the best possible environment for AI because AI is so multidimensional and so multimodal. (48:15) You can get somewhere with a coder, but you can't get as far as you would if you had a coder who understands AI, and you also have people nearby or involved who understand some of the social aspects, some of the medical aspects, some of the

Scott Benner Yeah.

Sarah The user. (48:33) The hardware, the user aspects. (48:35) I mean, with all these pieces to it, you're gonna create something much more meaningful and amazing, especially if all those people are able to use AI to speed them up and to refine their ideas and to get better products out in the hands of people faster. Mhmm. (48:51) Which is really what the industry is trying to do overall, and get feedback more quickly. (48:56) You know, all this can just be sped up so much. (48:59) I do wanna say one thing with the evaluation of these models and why it is harder to do than it was in the traditional machine learning models. (49:09) And that is because AI is, by nature, probabilistic and not deterministic. (49:13) And what that means is it chooses the most likely answer from a range of answers. (49:20) It doesn't always give the same answer given the same information. (49:25) So because of that, it's hard to test if it's working because, you know, say maybe, you know, even 17 out of 20 times, it'll give maybe not exactly the same, but a very similar answer, for example. (49:41) But then three of those 20 times, maybe it gives a very different answer or kind of a strange answer that's not quite as understandable, or it's just off enough that you don't really feel like you can be like, yeah. (49:52) Yeah. (49:52) That's a good one. (49:53) Mhmm. (49:53) How do you trust that? (49:55) And how do you say, well, it did a good job most of the time? (49:59) Is most of the time going to be sufficient for the users? (50:01) And then if you multiply that by the thousands and hundreds of thousands and millions of people who use these tools, you can see how those evaluation challenges would be very difficult, and that's one of the main things that regulatory bodies have really struggled with.
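
The difference between always taking the most likely answer and sampling from a range of answers can be shown with a toy model. The Python sketch below uses a made-up two-answer distribution (85% and 15%, echoing the "17 out of 20" example). It is a simplified illustration of sampling in general, not a description of how any particular AI model is implemented.

```python
import random

# Hypothetical answer probabilities for one fixed question (made-up numbers).
ANSWER_WEIGHTS = {"usual answer": 0.85, "odd answer": 0.15}


def most_likely(weights: dict[str, float]) -> str:
    """Deterministic choice: always the single highest-probability answer."""
    return max(weights, key=weights.get)


def sample_answers(weights: dict[str, float], n: int, seed: int) -> list[str]:
    """Probabilistic choice: draw n answers according to their weights.

    A fixed seed makes one run repeatable; a different seed can give a
    different mix of answers for the same input.
    """
    rng = random.Random(seed)
    answers = list(weights)
    return rng.choices(answers, weights=[weights[a] for a in answers], k=n)
```

Calling `most_likely` returns the same answer every time, while `sample_answers` with different seeds can return different mixes for the same question, which is why testing has to look at many runs rather than one.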

Scott Benner Well, I agree with you, but that shouldn't be the end of the conversation. (50:18) That's all.

Sarah No. (50:18) No.

Scott Benner That's all I'm saying. (50:19) Absolutely. (50:20) Yeah. (50:20) You don't hit a road bump and then go, oh, see... you don't do what you see online. (50:23) Like, it's not always right. (50:24) I asked the same question three times. (50:26) It said three different things. (50:27) Well, okay. (50:27) Well, I guess this doesn't work. No. (50:29) No. (50:29) No. (50:29) I have transcripts on my website. (50:31) Right? (50:31) This is not obviously delivering insulin, but I have transcripts on my website. (50:36) They're AI generated, but they're ugly. (50:38) And because they take up so much space, I have to put them behind kind of an accordion, like a collapse thing, which makes them not searchable for SEO, and that's problematic for me. (50:48) I would like the transcripts to be searchable. (50:51) So I finally had time to sit down, and I said to my prompt, I was like, here's my problem. (50:57) What can I do? (50:58) And it said, oh, you can give me the transcript, and I'll turn it into code. (51:01) You can put it into a code block, and then the code block can stay open partially, and you can click on it to open the full thing. (51:07) And that way, Google will be able to see it when it scrapes your site. (51:10) And I was like, oh, awesome. (51:11) Go ahead and do that. (51:12) So it did that, and I was like, alright. (51:16) I wish it was a little more like this. (51:17) I wish you would pull out some key takeaways, put them at the top. (51:20) I want the formatting to be more like this. (51:22) I need it to be more readable, you know? (51:24) And then I got it exactly where I wanted it. (51:26) I was like, awesome. (51:28) Now I've been using AI long enough to know that if I just start dropping a new text file and then saying, do it again, do it again, do it again, by the third one, it's gonna mess it up somehow. (51:36) Yep.
(51:36) So it gets to the third one and all of a sudden, it starts, like, leaving... it says cite start at, like, every pop. (51:44) And I just go back and I'm like, do not put the cite start language in the final product. (51:48) And it takes it all out, but then it gives you an abridged version of the transcript. (51:53) I said, you took out cite start, but then made the transcript abridged. (51:57) I need you to rewrite this so that you don't do that again. (52:00) And so then it does it, and I finally got it to a point where I realized that what I need to do is, I got it to write me a prompt. (52:07) I take the prompt, and I drop it into the window with a text box. (52:11) I hit return. (52:12) It gives me back code. (52:13) I drop the code in the code block, and then you get to see the transcript on the website. (52:17) It's very readable and lovely. (52:19) But what I need to do is every time I drop it in, I need to drop it in with a prompt. (52:22) I can't just say do the next one. (52:24) Do the next one. (52:24) To your point, like, I, for the life of me, don't understand why after the third or fourth one, it just starts to mess up.

Sarah It loses context. (52:33) Yeah.

Scott Benner Yeah. (52:34) Yeah. (52:34) But do you understand why that happens?

Sarah Yeah. (52:37) Yeah. (52:38) It loses context, and so, basically, it most easily sees the most recent things. (52:45) But if we think about our brains as having all these different memories in them, and that basically being kind of, like, employee handbooks that are within our brain for different things that we do or information we have. (52:57) The AI doesn't have nearly as much of that, especially for a specific task. (53:02) So it has kind of broader tasks like write a file or develop code. (53:06) But for these very specific tasks, it doesn't have that kind of memory to draw from.
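
The workaround described above, sending the full instructions with every transcript instead of saying "do the next one," can be sketched in Python as follows. This only builds the text of each request. It does not call any AI service, and the function names and the separator line are assumptions for illustration.

```python
def build_request(instructions: str, transcript: str) -> str:
    """Combine the full, reusable instructions with one transcript.

    Each request is self-contained, so nothing depends on what an
    earlier conversation turn said.
    """
    return f"{instructions.strip()}\n\n--- TRANSCRIPT ---\n{transcript.strip()}\n"


def build_batch(instructions: str, transcripts: list[str]) -> list[str]:
    """Build one independent request per transcript."""
    return [build_request(instructions, t) for t in transcripts]
```

Because every request repeats the complete instructions, the third or fourth transcript gets exactly the same guidance as the first, rather than relying on the model to remember how the conversation started.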

Scott Benner Mhmm.

Sarah The further away it gets from what it was asked to do initially, it just kind of

Scott Benner Starts to forget.

Sarah Yeah, more or less. (53:19) But I also wanna say, you know, you really have gotten deep into using these, and I think it can be really intimidating for people to hear the words like, oh, it made a code block and those kinds of things. (53:31) And I just wanna really emphasize, especially for this diabetes community that's so innovative and so dedicated, that it really just involves playing around with it for a while, and then you get this intuition like you have about, like, well, you know, it takes a few times, and after that, it really goes off the rails. (53:48) But understanding the nuances and the complexities of it is really from just using it. (53:54) And you can take classes. (53:56) There's online classes for most of the major, you know, frontier models have classes on how to use the different tools and how to upskill in them. (54:03) But my experience has been just using them on a regular basis for things you actually need to do and get taken care of

Scott Benner Yeah.

Sarah Is by far the best kind of learning. (54:11) And I think I would really just wanna leave your audience too with that message of this is something now that has has gotten to the point that normal people without any coding experience can use these tools and create really cool things, really cool things that previously would have needed a serious programmer to do.

Scott Benner If you don't like the way that sounds in your mind, think of it as construction. (54:33) Yeah. (54:34) Like, imagine if you had Green Lantern's ring, and you could just sit here and go, like, make wood, put it there, do this, make that taller, like, that kind of thing. (54:43) It feels like that to me. (54:45) And it's the obviously, it's all digital, but our lives are digital at this point. (54:49) So it's Right. (54:50) It's not a stick figure doing a jumping jack. (54:52) It's an actual thing that can impact, I don't know, your lives. (54:55) Like, I don't know if everybody will you know, can you use it around your home? (54:59) You know? (54:59) I don't know. (55:00) You could get it to you know? (55:02) And there's arguments, by the way. (55:03) Like, I I don't let it answer my email because I start thinking, like, well, if I let AI answer my email, then why are we emailing each other? (55:09) Aren't our AIs just talking to each other? (55:12) Right. (55:12) Yeah. (55:12) That that's not really, really valuable. (55:15) I answer my email all the time, like, by hand, by myself. (55:20) But Mhmm. (55:20) The other day, something happened online, and I needed to make a response to it. (55:25) And it was Sunday morning. (55:28) I guess I have a weird job. (55:29) Right? (55:29) Like, so people are kind of are talking about something, and I need to get involved, and I need to really, like, thoughtfully give my my ideas around it. (55:40) Okay? (55:40) Right. (55:41) So if that's gonna happen, then I gotta wrap my my mind around what's going on. (55:45) And then I've gotta read what people are saying. (55:47) Then I have to make sure I that I feel about it the way I think I feel about it. (55:51) Then I have to think about how to talk them about it. (55:53) Then I've gotta write it out. (55:54) Right. (55:54) Then I've gotta edit it and do it again and make sure it doesn't like, make sure it covers all the bases. (55:59) I'm not trying to be offensive.
(56:00) Blah blah blah blah blah. (56:01) It takes me about like, when you see one of those posts from me online, you're like, oh, Scott's such a well thought out guy. (56:07) He must blah blah blah. (56:07) It took, like, three hours to do that because I'm also not a classically trained typist. (56:12) My brain's the right person for it, but the rest of me is not the right person for it. (56:17) I was able to explain to a window what the problem was and how I felt about it. (56:25) And then say, I wanna talk about this, and I wanna say this and this and this and this and this. (56:29) And it structured it for me in, like, no time. (56:32) And then I was able to read that back and go, that I don't agree with. (56:36) That is exactly what I meant there. (56:38) I would say this differently and then basically rewrite it. (56:42) And instead of me taking three hours, it took me forty five minutes. (56:47) And but moreover, what you don't know is that if it was gonna take me three hours, I just wouldn't have done it.

Sarah (56:53) Right.

Managing Diabetes Hype on Social Media

Scott Benner I I would have looked at it and said, okay. (56:56) I can't get that done today because it's Sunday morning and my family's getting up and we're doing stuff and I don't have time for this. (57:02) So that thing would have just sat there untouched. (57:05) Now that was just a Facebook, you know, conversation, but I thought it was a big deal. (57:10) And then after I read it, I thought, oh, I'm gonna make a podcast episode about this too. (57:16) And I think it's gonna help people. (57:17) And and to give it more context is very simply, don't I'm I'm not gonna get on a soapbox here and waste your time, but I at the moment, there's a lot of conversation around the Eladon trial out of Chicago and they Uh-huh. (57:30) You know, and people are getting islet cells put in their liver. (57:34) They're taking this new immune suppressant called Tego, and they're not having a lot of any side effects, most of them are saying. (57:41) And so, you know, there these people have a functional cure, and this you know, there's a this is a trial going on. (57:46) It's not FDA approved. (57:48) It's like, you know, it's a trial. (57:50) And, it's exciting. (57:51) But because of how social media is set up now, everybody online is like, they cured diabetes and blah blah blah. (57:57) Like and I don't like that. (57:59) I don't like giving people the idea that this it's almost over because it's not. (58:05) Even if they got through the FDA today, there's still a ton of reasons why it's not gonna get to all, like, 1,800,000 of you probably ever or, you know, cost or and I just think we should talk about that, like, adults not use it as fodder for Instagram and TikTok to get likes and posts and retweets and stuff like that. (58:23) I just kinda contextualized how long I've been in the space, that I don't like talking about it this way. (58:28) I do think it's very important to talk about. 
(58:30) I've got somebody coming on the podcast to explain their situation, but you need to understand. (58:34) Blah blah blah. (58:34) I gained some more thoughtful thing, and I just never would have done it. (58:39) I just I would have run out of time and not done it. (58:42) But instead, that post gets twenty, thirty thousand views. (58:46) People are, you know, hundreds of likes and hearts, and you make people feel com it's a good thing. (58:51) And then I'll probably sit down and do, a talking head episode about fifteen minutes long explaining this because, you know, I understand everybody trying to be an influencer nowadays, but, like, come on. (59:02) Like, don't jerk people around about them getting cured about their diabetes. (59:06) Like, it's it's okay to explain to them what's going on. (59:09) It's not okay to make it sound like it's imminent, in my opinion. (59:14) Like right? (59:14) So then that's gonna be my perspective on it. (59:16) And and but, anyway, without AI, like, I would not have had time to put my thoughts together and put them down like that.

Sarah I Well, it's so important to have first of all, I think, you know, to have a a seasoned voice out there and a voice of that can really like you said, context is so important both for people and for AI to understand what what's actually happening and and where where we actually are in this. (59:43) And like you said, I think it it really is about creating those opportunities to do something where nothing would have been done that are the biggest the biggest yield. (59:52) Mhmm. (59:52) And to have you talk about these issues and to give that kind of very reasoned and helpful picture to people, I mean, that's a huge benefit to to the conversation. (1:00:07) And kind of a little ironically, it actually you you having put that out there and then it being engaged with so many times, thousands of times. (1:00:18) Because these models are scraping from the Internet, that actually helps give the these foundation models better information over time and a more reasoned viewpoint just by you using AI to put your thoughts together more quickly and, put that viewpoint out there.

Scott Benner Yeah. (1:00:36) Well, maybe one day, it won't tell Mark Zuckerberg to value people arguing over people talking, and maybe then some of these posts will get seen by other people. (1:00:45) Right.

Sarah There is that.

Prompt Engineering and the AI Learning Curve

Scott Benner There is that. (1:00:49) So I'm not gonna tell you what it said, but I will tell you that my prompt for trying to figure out why the podcast is valuable to people says, you are analyzing a long form podcast transcript. (1:01:00) Your task is not to summarize. (1:01:02) Your task is to extract moments where Scott gives directive advice, expresses a belief about how diabetes should be managed, challenges a common mindset, reframes fear into agency, pushes back against conventional thinking, describes what works or what doesn't work, return direct quoted statements, one to two sentences of context for each quote, Label each as tactical instruction, mindset principle, philosophical belief, behavioral pattern. (1:01:29) Do not invent ideas. (1:01:30) Only extract what is clearly present in the transcript. (1:01:33) Ignore guest only statements unless Scott affirms or reinforces them. (1:01:38) But I didn't write that prompt. (1:01:42) AI wrote that prompt with me explaining to it what I wanted it to do.

Sarah I love that.

Scott Benner Yeah. (1:01:48) Because I love that. (1:01:49) Because what I've learned is is that my dummy brain can't talk to it as well as it needs to. (1:01:55) So instead of jumping right into the task, I pre bolus the task with another task. (1:02:01) I go in and I go instead of just saying, like, go into these episodes and find out why I'm so great, like, you know, which I'm sure is how some people deserve that. (1:02:08) Instead of doing that, right, I say, here's my goal. (1:02:13) Here's what I think might be happening, but I first need you to read a couple of transcripts and tell me if I'm wrong. (1:02:20) And then it comes back and says, well, I think your impact might be this, this, and this. (1:02:25) I think we should look for these things. (1:02:26) And I go, okay. (1:02:27) Write me a prompt for you that will help you do that the best you can. (1:02:32) Like, that kind of stuff. (1:02:33) Like, I talk to it in, like, cleaner language, like or or, you know, more colloquial language like that. (1:02:38) And then it comes back, and it gives me the prompt. (1:02:40) And I go, okay. (1:02:41) Like, is there anything about this prompt that will lead us to and basically tell like, I don't I'm not looking for you to glaze me. (1:02:47) I'm not I'm not asking you to kiss my ass. (1:02:49) Like, I'm I'm I want real actual I want you to really think about human psychology and why things impact people and, you know, and and then I end up with this prompt. (1:02:59) And then the prompt does a really good job of pulling out ideas. (1:03:02) Now, where could I use that in the in the short term? (1:03:06) Probably social media. (1:03:07) Right? (1:03:07) Like, there's there's quotes in here as it's going through that are all, like, they're really valuable things for people living with diabetes. (1:03:14) I see each and every one of them. (1:03:15) But if you ask me to go, like, remember what I said and make a piece of social media about it, I can't do that. 
(1:03:21) And even if you ask me to go back and listen to the whole episode and jot down takeaways, like, I don't have the time for that either. (1:03:26) I would never get that accomplished. (1:03:28) I'm taking something that I already know helps people, and I'm finding a way to repurpose it to help different people. (1:03:35) And that's with that without this, that doesn't happen.

Sarah That has a lot of implications for diabetes in terms of also, I mean, there's a medical side on the, you know, the medical, the device, that really in the weeds side. (1:03:48) But then there's also the side of advocacy and communication about, you know, what what is this to, you know, a broad audience and then, you know, within the schools and, you know, within, you know, different settings. (1:04:02) So I think using AI for for those circumstances is also probably underutilized right now in terms of people saying, oh, you know, I've I'm doing a fundraiser, and I think this is important. (1:04:13) And, you know, I can maximize it much more easily if I use AI. (1:04:18) Or I don't really have the words to describe why I'm having you know, to describe a certain issue to the school nurses. (1:04:25) Can you help me put it into a way that they might understand better? (1:04:28) So I I think a lot of those kind of communication pieces are great use cases for AI too

Scott Benner Yeah.

Sarah And especially for the diabetes community.

Scott Benner Yeah. (1:04:36) Just in general, I think some of you are just not thinking about this the right way. (1:04:41) That's all. (1:04:42) Like, there's real ways to use this for yourself right now. (1:04:45) You just have to kind of like, you have to just step back and see how it works and how it thinks and how you talk and how you can do those things together to lead it to do the thing you want it to do. (1:04:57) Like, it's not just it that's what happens when people say, like, I asked it something that got it wrong. (1:05:01) I'm like, I would love to see what you wrote into that because I bet I bet you didn't have a chance in hell. (1:05:06) And it does get stuff wrong like we talked about. (1:05:08) Like, that that I understand. But as you also have agency and you could read it and decide if that's if if if what it told you makes any sense or not. (1:05:16) I just think, like, using this as an example, in my heart, like, I don't know how to do this yet. (1:05:23) I haven't been able to teach myself the whole thing. (1:05:26) What I would like is an app where you can listen to the podcast, but where also, like, daily affirmations might pop up, like, that are just from content from the podcast. (1:05:37) I would love it if one day that app had you know, I know you can't do this because Facebook won't let the API out, but I would love it if the Facebook group just lived if it all lived together. (1:05:47) I don't imagine that's gonna happen. (1:05:49) I think the code would get crazy and and and it wouldn't work. (1:05:52) But I would just I would just like an app that you open up that I don't know. (1:05:56) When it opens, it says something to you that you would might find valuable or supportive about diabetes. (1:06:03) You swipe up, and there's the little app where you figure out your bolus for your day or something like that.

Sarah Right.

Scott Benner I just think that might be nice for people and, you know, a way for them to take a break or to be reminded of something because I hear that all the time from people. (1:06:18) Like, one of the things that somebody will say is, like, I already really know how to take care of my diabetes, but listening to the podcast keeps me, the way they tell me is, like, focused on it without being too focused on it. (1:06:31) So it's not front of mind, and and they're not always like, god. (1:06:35) I'm always thinking about my diabetes, but it's around just enough that they find themselves making good decisions. (1:06:40) And I wonder if, like, just having something pop up in front of you that says, like, you know, you get what you expect. (1:06:46) And, you know, if you expect a 130, you're probably gonna get a 130. (1:06:49) Setting you know, if your high alarm's set at 150 right now, try moving it down.

Sarah (1:06:53) Right.